From 49b959b5408b97274e2ee423059d9239445aea26 Mon Sep 17 00:00:00 2001

From: Steven Liu <59462357+stevhliu@users.noreply.github.com>

Date: Fri, 3 May 2024 16:08:27 -0700

Subject: [PATCH] [docs] LCM (#7829)

* lcm

* lcm lora

* fix

* fix hfoption

* edits

---

docs/source/en/_toctree.yml | 6 +-

.../en/using-diffusers/inference_with_lcm.md | 465 ++++++++++++++++--

.../inference_with_lcm_lora.md | 422 ----------------

3 files changed, 413 insertions(+), 480 deletions(-)

delete mode 100644 docs/source/en/using-diffusers/inference_with_lcm_lora.md

diff --git a/docs/source/en/_toctree.yml b/docs/source/en/_toctree.yml

index f2755798b7..89af55ed2a 100644

--- a/docs/source/en/_toctree.yml

+++ b/docs/source/en/_toctree.yml

@@ -81,16 +81,14 @@

title: ControlNet

- local: using-diffusers/t2i_adapter

title: T2I-Adapter

+ - local: using-diffusers/inference_with_lcm

+ title: Latent Consistency Model

- local: using-diffusers/textual_inversion_inference

title: Textual inversion

- local: using-diffusers/shap-e

title: Shap-E

- local: using-diffusers/diffedit

title: DiffEdit

- - local: using-diffusers/inference_with_lcm_lora

- title: Latent Consistency Model-LoRA

- - local: using-diffusers/inference_with_lcm

- title: Latent Consistency Model

- local: using-diffusers/inference_with_tcd_lora

title: Trajectory Consistency Distillation-LoRA

- local: using-diffusers/svd

diff --git a/docs/source/en/using-diffusers/inference_with_lcm.md b/docs/source/en/using-diffusers/inference_with_lcm.md

index 798de67c65..19fb349c54 100644

--- a/docs/source/en/using-diffusers/inference_with_lcm.md

+++ b/docs/source/en/using-diffusers/inference_with_lcm.md

@@ -10,29 +10,30 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

specific language governing permissions and limitations under the License.

-->

-[[open-in-colab]]

-

# Latent Consistency Model

-Latent Consistency Models (LCM) enable quality image generation in typically 2-4 steps making it possible to use diffusion models in almost real-time settings.

+[[open-in-colab]]

-From the [official website](https://latent-consistency-models.github.io/):

+[Latent Consistency Models (LCMs)](https://hf.co/papers/2310.04378) enable fast high-quality image generation by directly predicting the reverse diffusion process in the latent rather than pixel space. In other words, LCMs try to predict the noiseless image from the noisy image in contrast to typical diffusion models that iteratively remove noise from the noisy image. By avoiding the iterative sampling process, LCMs are able to generate high-quality images in 2-4 steps instead of 20-30 steps.

-> LCMs can be distilled from any pre-trained Stable Diffusion (SD) in only 4,000 training steps (~32 A100 GPU Hours) for generating high quality 768 x 768 resolution images in 2~4 steps or even one step, significantly accelerating text-to-image generation. We employ LCM to distill the Dreamshaper-V7 version of SD in just 4,000 training iterations.

+LCMs are distilled from pretrained models which requires ~32 hours of A100 compute. To speed this up, [LCM-LoRAs](https://hf.co/papers/2311.05556) train a [LoRA adapter](https://huggingface.co/docs/peft/conceptual_guides/adapter#low-rank-adaptation-lora) which have much fewer parameters to train compared to the full model. The LCM-LoRA can be plugged into a diffusion model once it has been trained.

-For a more technical overview of LCMs, refer to [the paper](https://huggingface.co/papers/2310.04378).

+This guide will show you how to use LCMs and LCM-LoRAs for fast inference on tasks and how to use them with other adapters like ControlNet or T2I-Adapter.

-LCM distilled models are available for [stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5), [stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0), and the [SSD-1B](https://huggingface.co/segmind/SSD-1B) model. All the checkpoints can be found in this [collection](https://huggingface.co/collections/latent-consistency/latent-consistency-models-weights-654ce61a95edd6dffccef6a8).

-

-This guide shows how to perform inference with LCMs for

-- text-to-image

-- image-to-image

-- combined with style LoRAs

-- ControlNet/T2I-Adapter

+> [!TIP]

+> LCMs and LCM-LoRAs are available for Stable Diffusion v1.5, Stable Diffusion XL, and the SSD-1B model. You can find their checkpoints on the [Latent Consistency](https://hf.co/collections/latent-consistency/latent-consistency-models-weights-654ce61a95edd6dffccef6a8) Collections.

## Text-to-image

-You'll use the [`StableDiffusionXLPipeline`] pipeline with the [`LCMScheduler`] and then load the LCM-LoRA. Together with the LCM-LoRA and the scheduler, the pipeline enables a fast inference workflow, overcoming the slow iterative nature of diffusion models.

+

+

+

+To use LCMs, you need to load the LCM checkpoint for your supported model into [`UNet2DConditionModel`] and replace the scheduler with the [`LCMScheduler`]. Then you can use the pipeline as usual, and pass a text prompt to generate an image in just 4 steps.

+

+A couple of notes to keep in mind when using LCMs are:

+

+* Typically, batch size is doubled inside the pipeline for classifier-free guidance. But LCM applies guidance with guidance embeddings and doesn't need to double the batch size, which leads to faster inference. The downside is that negative prompts don't work with LCM because they don't have any effect on the denoising process.

+* The ideal range for `guidance_scale` is [3., 13.] because that is what the UNet was trained with. However, disabling `guidance_scale` with a value of 1.0 is also effective in most cases.

```python

from diffusers import StableDiffusionXLPipeline, UNet2DConditionModel, LCMScheduler

@@ -49,31 +50,69 @@ pipe = StableDiffusionXLPipeline.from_pretrained(

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

-

generator = torch.manual_seed(0)

image = pipe(

prompt=prompt, num_inference_steps=4, generator=generator, guidance_scale=8.0

).images[0]

+image

```

-

+

+

+

+

+

+

+

+

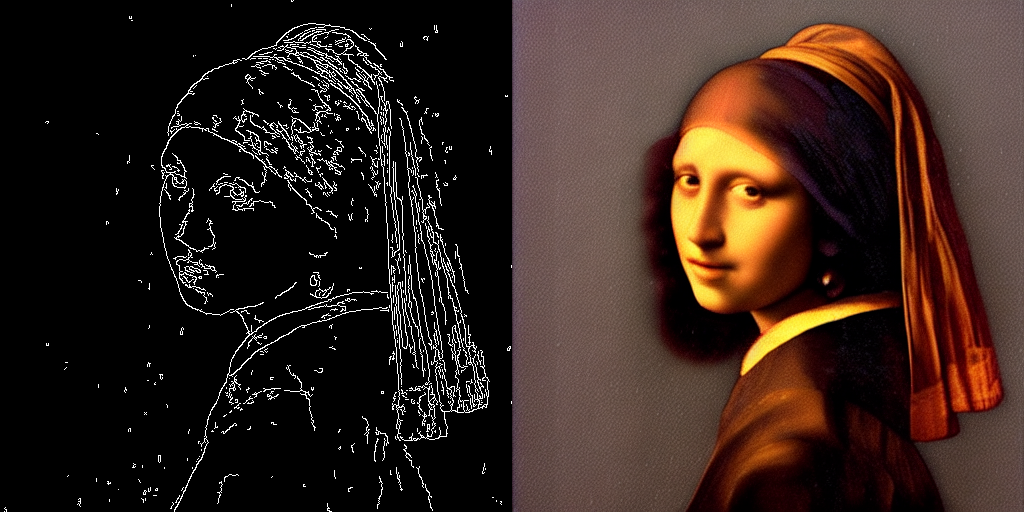

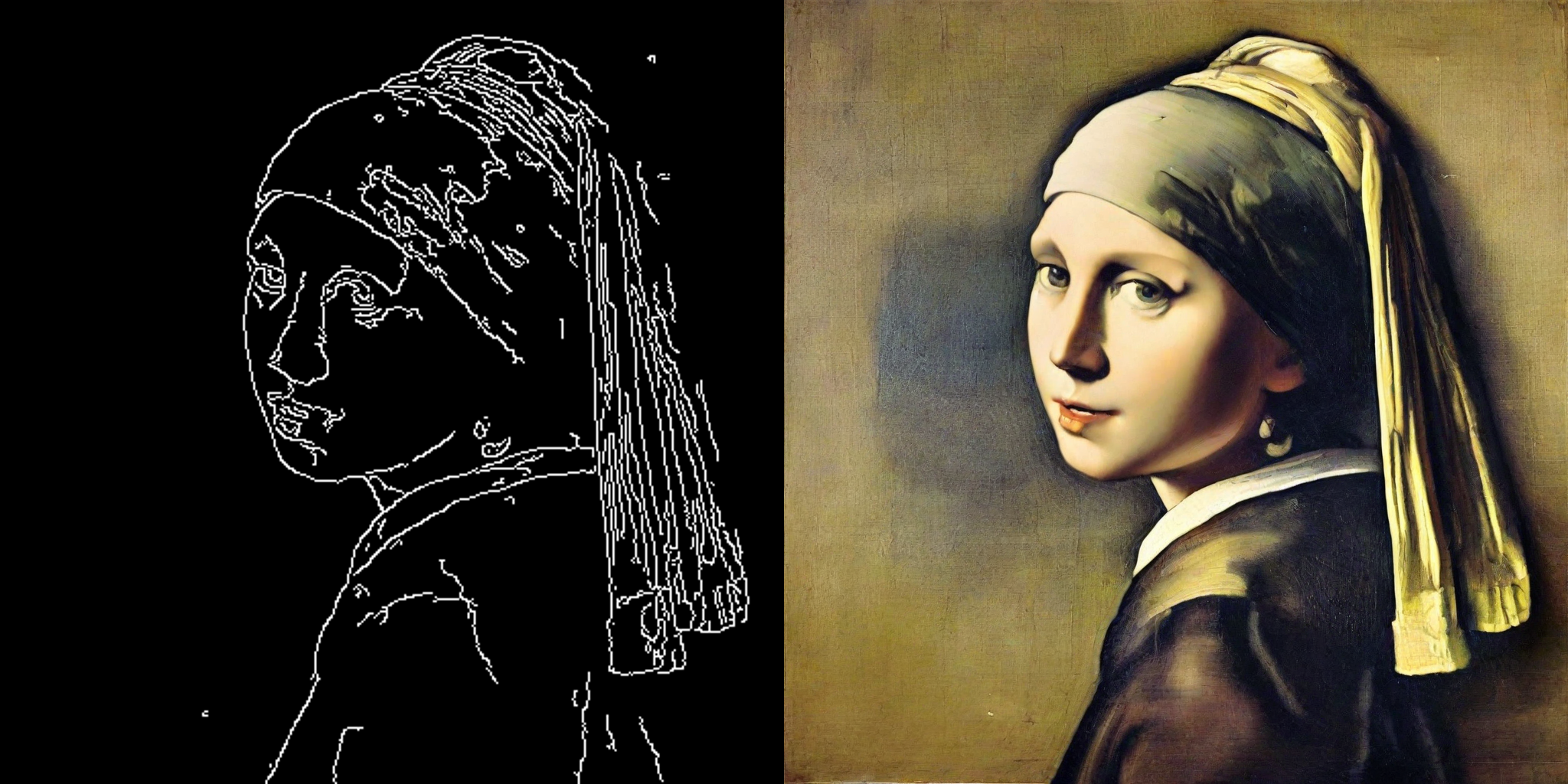

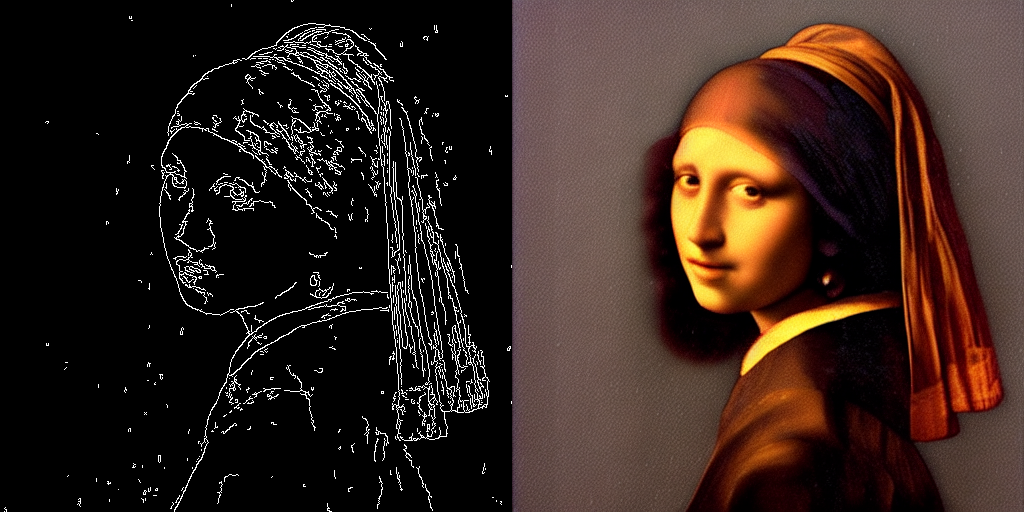

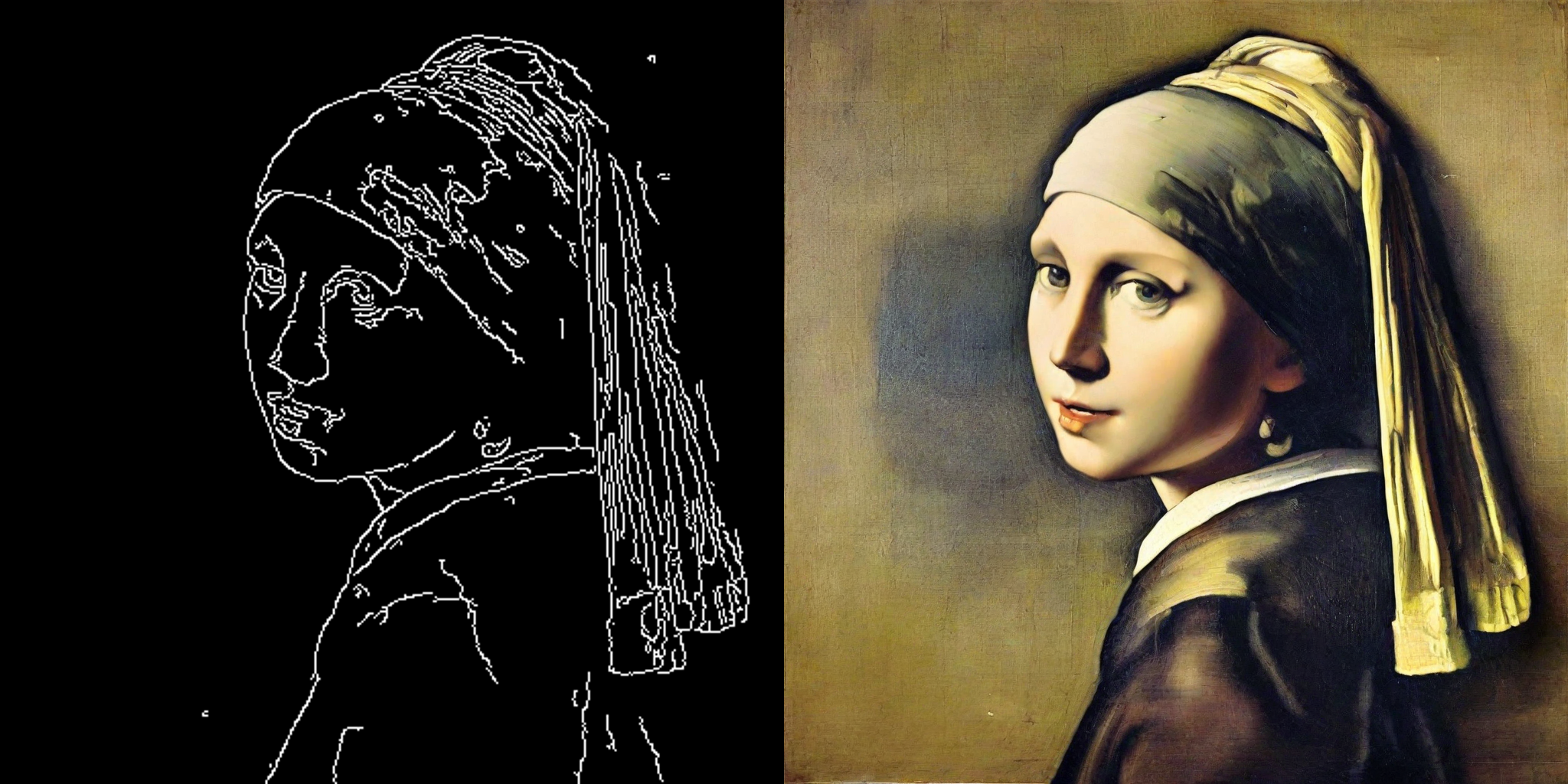

initial image

+

+

+

+

generated image

+

+

+

+

+

initial image

+

+

+

+

generated image

+

+

+

+

+

initial image

+

+

+

+

generated image

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+ +

+ +

+ +

+